Your Brain Is Not Keeping Up With the AI Hype Cycle (And That's a Problem)

The cognitive biases shaping how today's product teams build, decide, and burn out.

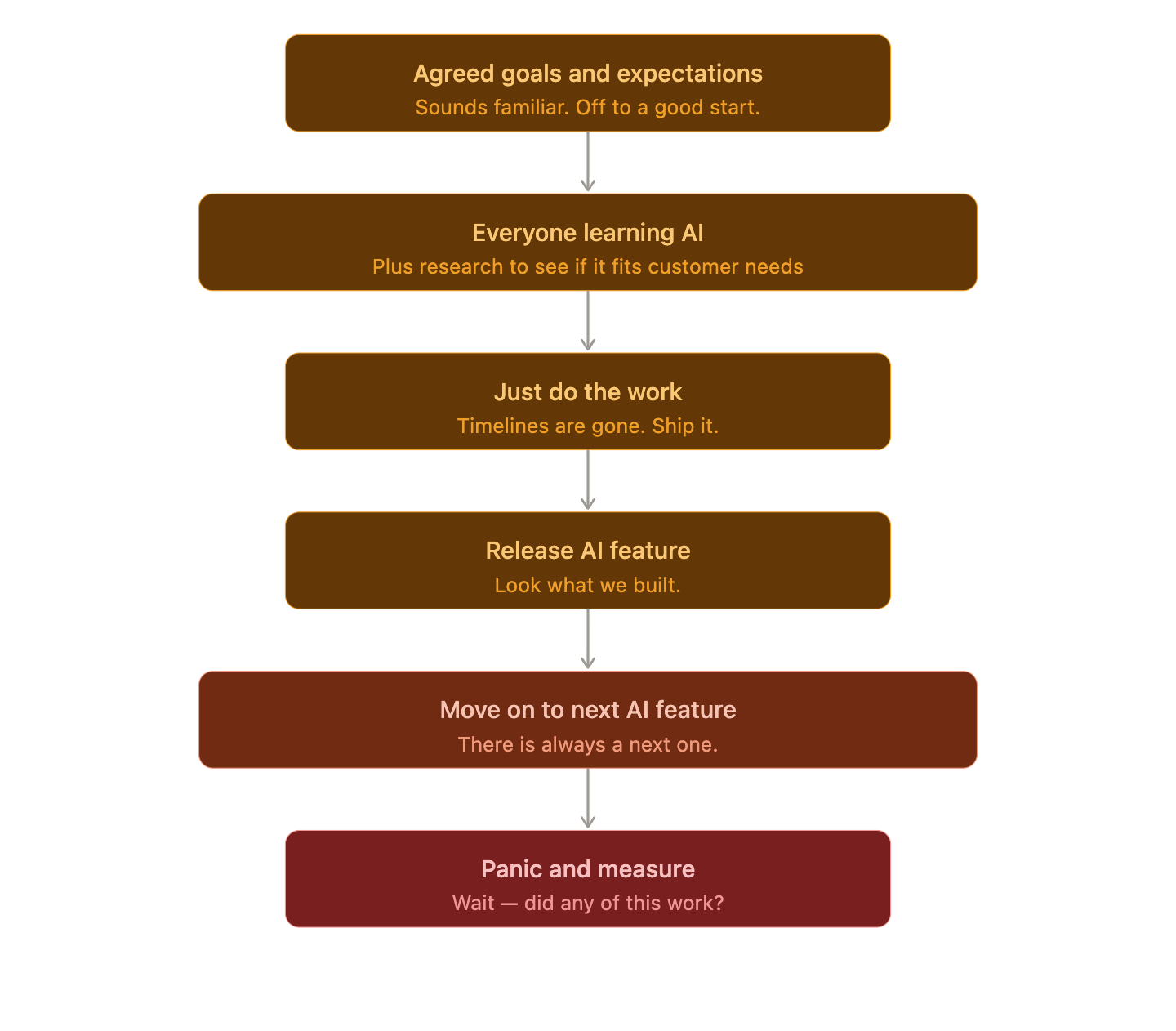

How many of you just walked out of a meeting where your team is pivoting their roadmap because of a demo someone saw two weeks ago? A competitor shipped an AI feature and it’s so cool. Someone in leadership forwarded a breathless LinkedIn post about it. And now, the last three conversations in Slack have been about what the team “needs to add” before the next sprint.

No one has looked at the mountain of customer and market data in a while. Instead, everyone is fixated on this one point in time. This isn’t the exception anymore. It’s the norm.

We are in an era that is genuinely exciting and genuinely disorienting at the same time, and the problem isn’t technology. The problem is what the pace of that technology is doing to our decision-making. The problem is our brains.

What was the inspiration for this post? AI, you say? Sorta. I bit the bullet and got my 13-year-old kid a phone. This past weekend I started talking with my friends about the impact of technology on our kids’ brains (and about the contract I made my kid sign so I could help manage the cognitive changes and load with some force). It’s wild how quickly the conversation came back to what technology is doing to every one of us, in tech or not.

What a cognitive bias actually is

I fell in love with understanding this a few years ago, at Pendo, when we started looking at how it impacts product teams. A cognitive bias isn’t a character flaw. Rather, it’s a shortcut your brain takes because it was never designed to process the volume of information the modern world throws at it. The errors aren’t random - they’re actually predictable, which is both the bad news and the good news. Your brain builds a “subjective reality” from what it can access, and it acts on that reality even when the full picture looks different. If you’ve not yet read Thinking, Fast and Slow by Daniel Kahneman (and are up for a nice long but worthwhile read) you’ll get this quickly.

In product, those shortcuts have always existed. But right now, in an environment where everyone is watching what the next AI release will do to their job, their roadmap, and their relevance, those shortcuts are working overtime.

Luckily, my LinkedIn feed (and likely yours) serves up a steady stream of posts that showcase the biases doing the most damage in product development today. The culprits are recency bias, the bandwagon effect, and confirmation bias.

Recency Bias: The Last Thing You Heard Is Running Your Roadmap

Recency bias is exactly what it sounds like. Your brain gives disproportionate weight to what just happened, and what someone just saw, at the expense of what’s been true for a longer stretch of time.

You see it in meetings when all the decisions get made in the last fifteen minutes based on whatever was said most recently. You see it in prioritization when the feature that just got the most Slack reactions ends up in the next sprint. You see it in strategy when one bad quarter or one viral competitor feature triggers a full rethink that wasn’t supported by the underlying data.

Neuroscientists at the Sainsbury Wellcome Centre and Imperial College London published research in 2024 showing that recency bias in working memory is not just a behavioral tendency. It’s actually embedded in how neural circuits function. The same mechanism that causes us to overweight recent information also pulls our judgment towards “the average of previous observations,” which means we’re often not making bold decisions at all. We’re regressing to the mean and calling it a pivot.

(Come on, you know you saw the scene in your head too.)

In product, this is the quiet enemy of durable strategy.

When the most recent customer complaint, competitor launch, or leadership comment overrides months of evidence, you stop building toward a goal and start reacting to a feed.

The AI moment has amplified this tenfold. Every week brings a new model, a new capability, a new think piece about what it all means. If your team is letting the most recent piece of that inform their direction, they’re not building a roadmap. They’re building a mood board.

Bandwagon Effect: AI Is Everywhere So We Must Need It Too

The bandwagon effect - the tendency to adopt something because others appear to be adopting it - is operating at a scale we haven’t seen since the mobile-first era (some of you reading this have zero idea what I’m referring to, and I’m now at peace with that).

The data tells an interesting story around this effect. FactSet tracking shows that a record 331 S&P 500 companies mentioned AI on their Q4 2025 earnings calls - 68% of all calls that quarter - with that number growing every quarter for the past two years. But a study published by the National Bureau of Economic Research surveyed thousands of CEOs and senior executives across the US, UK, Germany, and Australia and found that the majority reported little meaningful impact from AI on their actual operations. The macro-level outlook is similarly modest: one MIT analysis put the productivity increase over the next decade at around 0.5%.

The gap between what companies are saying and what they are actually seeing is enormous. And that gap exists largely because teams are adopting AI tools to signal progress, not because they’ve tied those tools to a specific outcome they’re trying to move.

There is a deeper layer to this that I am seeing in conversations with founders and product leaders. Boards and investors are creating an impossible tension right now. They are holding companies to the same expectations around growth and retention they always have while simultaneously pressuring them to be at the forefront of AI in their portfolio. What that pressure actually demands is something most have not yet embraced, or perhaps understood: a deliberate separation between smart, focused investments in core product and genuine runway for innovation. McKinsey’s research on 20 AI-leading companies across industries found that the ones delivering real results - an average 20% EBITDA uplift and $3 of incremental EBITDA for every $1 invested - concentrated their efforts on just one to three business domains and reinvented those with AI, rather than spreading bets across the board. They made substantial, stage-gated investments and stayed focused. They protected the core while making real room for the experiments that might actually move something. That is not a radical idea. It is just a conversation most boards are not yet having.

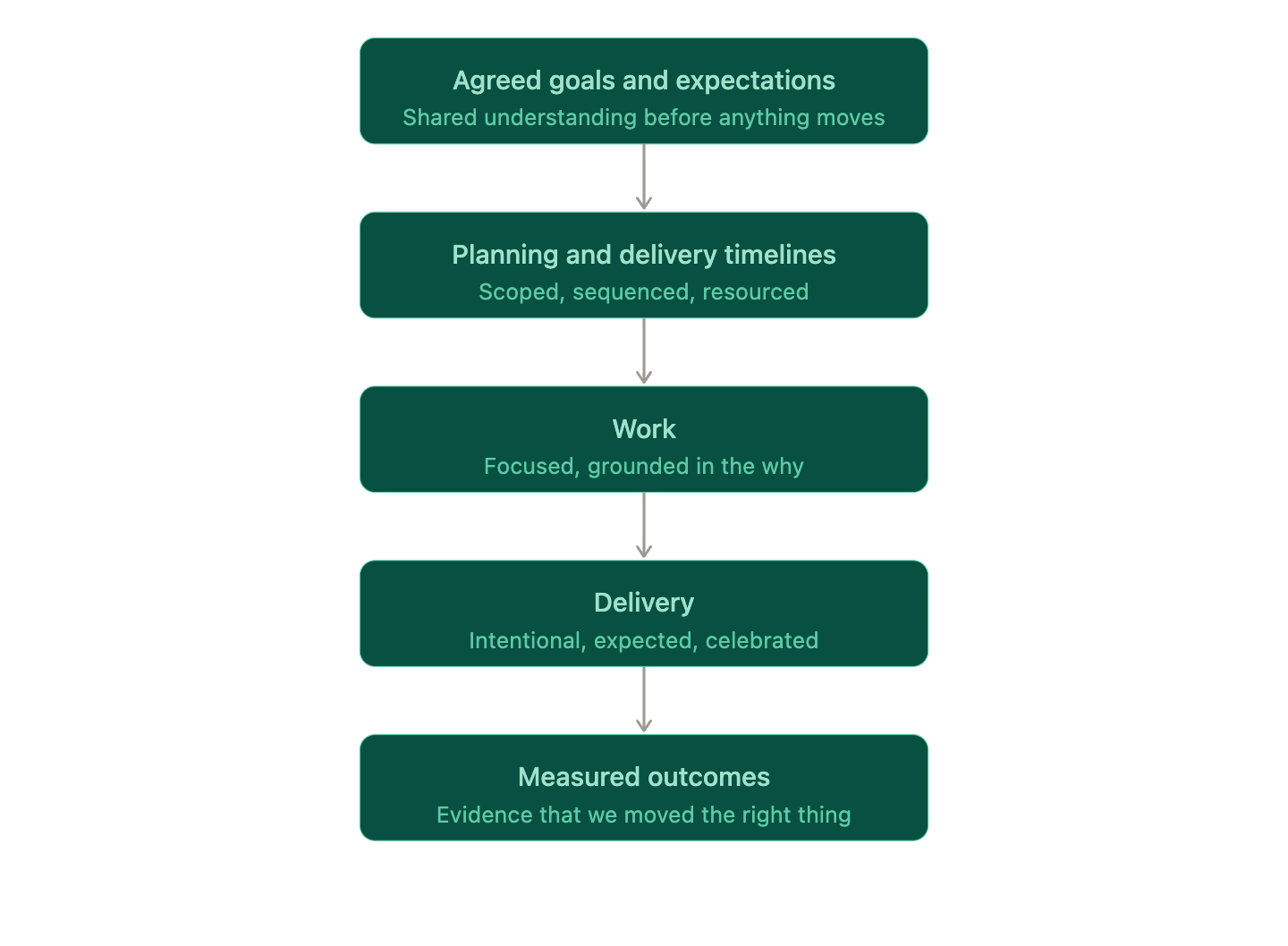

Goals set expectations and they drive the work. This is a massive change management moment for all of us. The downstream effects of this tension are something everyone should take seriously. Product operations teams can be more essential in this moment than ever before. When you implement tooling or redesign a workflow because “everyone is doing it,” you’ve decoupled your process from your actual problem. Some of you I’ve spoken with have completely abandoned guardrails and process because of the chaos. That makes it much harder to evaluate whether anything you’re doing is working, because the North Star is “keeping up” rather than “improving a metric that matters.”

Confirmation Bias: The Feature Factory Runs on This

Confirmation bias - the tendency to seek and interpret information in ways that confirm what you already believe - has always been the most common cognitive bias on product teams. Some researchers call it the “patron bias” behind feature factories, and that framing is right.

When a team has already committed to an AI roadmap, the research they run will tend to surface the evidence that supports it. When leadership has already decided that a certain metric now matters, the data presented in reviews will tend to emphasize that metric. When a PM believes a feature will land, the user testing will tend to be designed in ways that confirm, rather than challenge, that belief.

None of this is intentional. That’s the whole point. Confirmation bias operates quietly, below the level of deliberate reasoning, and it’s exceptionally difficult to catch in yourself.

In the current moment, when teams are under enormous pressure to show that their AI investments are paying off, confirmation bias is especially dangerous. An Upwork Research Institute study found that 77% of employees using AI said the tools had actually decreased their productivity and increased their workload. But when leaders are expecting a positive story, the data that surfaces in presentations often tells the positive story instead.

Summing up the World Before vs. the World Now -

Then:

Now:

And now, the Burnout

Here’s where it gets intense for the people doing this work.

An eight-month study conducted by researchers at UC Berkeley and published in the Harvard Business Review in early 2026 followed 200 employees at a US-based tech company before and after AI tools were introduced. What they found wasn’t that AI freed people up. It was that AI allowed people to do more, and so they did - longer hours, broader scope, faster pace, often without being asked. Workload crept up quietly, and then became the new baseline.

The researchers described the cumulative effect as “fatigue, burnout, and a growing sense that work is harder to step away from.” Their conclusion: “Without intention, AI makes it easier to do more - but harder to stop.”

This is what cognitive bias looks like at the systemic level. Teams driven by recency bias chase every new capability. Teams driven by the bandwagon effect purchase tools before they’ve defined the problem. Teams driven by confirmation bias design processes that validate the urgency rather than question it. And individuals, caught in all of it, expand to fill the space AI creates - and then keep going.

The burnout isn’t from the technology. It’s from the unexamined assumptions that live under the usage, adoption, and implementation of it.

This is a Performance Problem

Before we get into what to do, it’s worth sitting with why it’s worth doing.

McKinsey, of course, has spent years studying the business impact of cognitive bias across industries, and the numbers are real. This one is a bit of a read, but it’s a good one. In an analysis of two investment funds that implemented structured debiasing practices, performance improvements of 150 to 200 basis points per year were documented - in one case generating over £200 million for unit holders. Their broader research estimated that even high-performing organizations could be leaving 100 to 300 basis points of value on the table annually due to unchecked bias in decision-making.

The biases they reported on are not the same as the ones in this piece, which I picked because they match what I’m seeing today in our space (and on our feeds). Still, the article is worth a read to understand the impact of bias overall on performance and company outcomes. Investment funds are a clean case because every decision has a measurable outcome. Product decisions are messier, but the mechanism is the same: predictable patterns of biased thinking produce predictably suboptimal results. And unlike market volatility or competitive pressure, bias is something you can actually design around.
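If basis points aren’t part of your daily vocabulary: one basis point is one hundredth of a percentage point, so 150 to 200 basis points works out to 1.5 to 2 percentage points a year. Here is a minimal sketch of that arithmetic. The portfolio value is a hypothetical number I picked only to make the scale concrete; it is not a figure from the McKinsey analysis.

```python
def bps_to_fraction(bps):
    """Convert basis points to a fraction (1 bp = 0.01% = 0.0001)."""
    return bps / 10_000


def annual_uplift(portfolio_value, bps):
    """Value added per year by an improvement of `bps` basis points."""
    return portfolio_value * bps_to_fraction(bps)


# Hypothetical portfolio value, for illustration only.
portfolio_value = 1_000_000_000

for bps in (150, 200):
    pct = bps_to_fraction(bps)
    gain = annual_uplift(portfolio_value, bps)
    print(f"{bps} bps = {pct:.2%} per year, or about {gain:,.0f} on {portfolio_value:,}")
```

The point is not the exact figure. Small-sounding percentages compound into real money once the base is large, which is why the performance framing matters.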

The work of managing cognitive bias isn’t a side project for when things slow down. It’s the infrastructure underneath every other thing you’re trying to build.

What to Do About It (For Yourself and Your Team)

None of this means you should slow down. It means you should be more intentional about what you’re speeding toward. I love Dave Masters’ take on this as it’s about intention.

A few things that actually work for teams:

To combat recency bias, separate the signal from the noise on a schedule. Recency bias absolutely thrives in reactive environments. One of the simplest interventions is designating a regular cadence - weekly, biweekly - where your team reviews a broader window of data before making decisions. Not just last week. Not just this sprint. The trend over three to six months.

Another is to create meeting structures that interrupt recency bias. Following on the above, consider making the first agenda item in any roadmap or prioritization meeting a review of existing evidence, not new input. When the first thing the group processes is the history of a topic, not the most recent thing that happened, you structurally reduce the weight of recency.
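If your team keeps its signals in a spreadsheet or a dashboard export, the broader-window review can be as simple as putting the latest week next to the six-month baseline before anyone reacts. Below is a minimal sketch of that idea. The function names, the 7-day and 180-day windows, and the weekly complaint counts are all assumptions of mine for illustration, not a prescribed method.

```python
from datetime import date, timedelta
from statistics import mean


def window_mean(points, today, days):
    """Average the values dated within the last `days` days (None if empty)."""
    cutoff = today - timedelta(days=days)
    values = [value for day, value in points if cutoff < day <= today]
    return mean(values) if values else None


def recency_check(points, today, short_days=7, long_days=180):
    """Put the most recent window next to the longer-run baseline."""
    recent = window_mean(points, today, short_days)
    baseline = window_mean(points, today, long_days)
    if recent is None or baseline is None or baseline == 0:
        return None  # not enough history to compare
    return {"recent": recent, "baseline": baseline, "ratio": recent / baseline}


# Hypothetical data: 26 weekly complaint counts, flat at 10 until a spike this week.
today = date(2025, 6, 30)
points = [(today - timedelta(weeks=i), 30 if i == 0 else 10) for i in range(26)]

result = recency_check(points, today)
print(result)
```

A high ratio is a reason to ask questions, not a reason to rewrite the roadmap. The value of the exercise is that the group sees the baseline before it sees the spike.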

To combat bandwagon, name the “why” before the “what.” Before any AI tool evaluation or capability addition gets prioritized, require your team to write one sentence connecting it to a specific business outcome with a number attached. If you can’t write that sentence, you’re likely looking at bandwagon adoption, not strategic adoption.

To combat confirmation bias, build research processes that try to disprove, not confirm. Confirmation bias loses its hold when the explicit goal of a research session is to find out what’s wrong with your hypothesis, not what’s right with it. Ask your team: what would have to be true for us to be wrong about this?

Overall we should look for ways to protect decision quality, not just output. The Berkeley researchers specifically recommend what they call “decision pauses” before high-stakes choices - structured moments to slow down before committing. If your team is moving at a pace where decisions feel automatic, that’s the signal to pause, not accelerate.

And for everyone, this still holds true. AI or not -

Notice when your conviction about something increased because of a demo you saw last week. There’s a difference between being excited about something shiny (which is totally fine) and chasing something shiny because someone else is doing it and you feel left behind.

Notice when urgency feels like it’s coming from the environment rather than from your own strategic assessment.

Notice when the last most important thing is no longer the most important, even though it was grounded in data and had a clear line to achieving a business outcome. Those shifts are not as simple as reordering priorities - they’re about learning to accept that change will have ripple effects. I’ve always told my teams that the dynamics, prioritization culture, and resources at one organization are not the same as at another. So the thing you are chasing will likely need to be done differently than it was at that other company, and it will almost always impact existing agreed-upon outcomes.

Pausing to notice is the practice.

The Longer View

The irony of this moment is that the teams who will build the most durable products are probably not the ones moving fastest. They’re the ones who are clearest about what they’re actually trying to accomplish and why, and who set up mechanisms to measure success before moving on. They’re the ones who have built the discipline to keep that clarity even when the hype cycle is deafening.

Cognitive biases don’t go away. They’re part of how your brain works, and that won’t change regardless of how sophisticated the tools around you get. What changes is whether you’ve built the awareness (the noticing) and the systems to catch them before they make decisions for you.

Your roadmap deserves better than your most recent Slack thread. Your team deserves better than keeping up. And you deserve to be building toward something that still makes sense when the current hype cycle has passed.

Just like that contract I made for my kid, find ways to focus on keeping the noise out.